Every MLOps Engineer Should Read This

Improving Your MLOps Platform Efficiency by 25x with DragonflyDB

Hello Everyone

Welcome to your AKVAverse! I’m Abhishek Veeramalla, aka the AKVAman, your guide to Cloud, DevOps, and AI.

I recently shared insights from a real-world use case: building a machine learning platform that hosts fraud-detection models. During the serving phase, we struggled to reach the efficiency we needed because of training-serving skew and performance bottlenecks.

Today, I want to break down the MLOps game changer we implemented: migrating the online component of our Feast feature store from Redis to DragonflyDB. This migration significantly improved both model inference performance and overall platform efficiency.

Let’s Begin

Addressing Training-Serving Skew

During the training phase, features are typically queried and extracted from a data warehouse that holds historical data. For example, a model that predicts whether a transaction is fraudulent might use a feature such as the number of transactions a user made in the 24 hours before the current timestamp. In the serving phase, however, the same features must be retrieved very quickly from an in-memory data store through an API call.

This difference in extraction methods (a query against a data warehouse versus an API call to an in-memory store) means the same feature often ends up implemented twice, and the two implementations can drift apart. When they do, the model receives different inputs in production than it saw in training, and prediction accuracy drops. Beyond consistency, performance is vital: if a model takes too long to compute a prediction (an hour, or even two minutes), the result arrives too late to be useful to the end user. This is why we use Feast.
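To make the skew concrete, here is a small Python sketch (the transaction data and function names are made up for illustration). The "training query" and the "serving code" both claim to compute transactions in the last 24 hours, but one treats the window start as exclusive and the other as inclusive, so they disagree for the same user at the same moment:

```python
from datetime import datetime, timedelta

# Hypothetical transaction log for one user: (user_id, timestamp)
TRANSACTIONS = [
    ("u1", datetime(2024, 1, 1, 9, 0)),
    ("u1", datetime(2024, 1, 1, 12, 0)),
    ("u1", datetime(2024, 1, 2, 9, 0)),
]


def offline_txn_count_24h(user_id: str, as_of: datetime) -> int:
    """Warehouse-style logic: counts transactions in (as_of - 24h, as_of]."""
    start = as_of - timedelta(hours=24)
    return sum(1 for u, ts in TRANSACTIONS if u == user_id and start < ts <= as_of)


def online_txn_count_24h(user_id: str, now: datetime) -> int:
    """Separately hand-written serving logic: counts transactions in [now - 24h, now]."""
    start = now - timedelta(hours=24)
    return sum(1 for u, ts in TRANSACTIONS if u == user_id and start <= ts <= now)


moment = datetime(2024, 1, 2, 9, 0)
print(offline_txn_count_24h("u1", moment))  # 2
print(online_txn_count_24h("u1", moment))   # 3
```

A one-character difference like `<` versus `<=` is enough for the model to see different inputs in production than it saw in training.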

Solving Consistency with Feast

To maintain feature consistency and ensure performance, we opted for a feature store solution: Feast. Feast is an open-source, horizontally scalable feature store designed to address exactly these problems.

Feast maintains feature consistency by having engineers define each feature once, in the feature definition files of a Feast repository. Those definitions then act as the single source of truth for both model training and serving.
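Here is a toy Python illustration of the single-source-of-truth idea. `FeatureDefinition`, `training_features`, and `serving_features` are hypothetical names, not Feast's API: in real Feast the definitions are declarative (entities, feature views, data sources), and values are materialized into the online store rather than recomputed on every request. The point is only that both paths call one shared definition, so they cannot drift apart:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, List, Tuple

Txn = Tuple[str, datetime]  # (user_id, timestamp)


@dataclass(frozen=True)
class FeatureDefinition:
    """Hypothetical stand-in for a feature declared once in a feature repo."""
    name: str
    compute: Callable[[List[Txn], str, datetime], int]


def _count_last_24h(txns: List[Txn], user_id: str, as_of: datetime) -> int:
    # The window (as_of - 24h, as_of] is defined in exactly one place
    start = as_of - timedelta(hours=24)
    return sum(1 for u, ts in txns if u == user_id and start < ts <= as_of)


TXN_COUNT_24H = FeatureDefinition("txn_count_24h", _count_last_24h)


def training_features(txns: List[Txn], label_events: List[Txn]) -> List[dict]:
    """Training path: evaluate the definition at each historical event time."""
    return [
        {"user_id": u, "event_time": t,
         TXN_COUNT_24H.name: TXN_COUNT_24H.compute(txns, u, t)}
        for u, t in label_events
    ]


def serving_features(txns: List[Txn], user_id: str, now: datetime) -> dict:
    """Serving path: evaluate the same definition at request time."""
    return {TXN_COUNT_24H.name: TXN_COUNT_24H.compute(txns, user_id, now)}
```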

Feast operates using two primary components:

Offline Store: During the training phase, Feast connects to data warehouses (such as DuckDB, a popular choice) to extract historical features according to the single feature definition.

Online Store: During the real-time serving phase, Feast connects to an in-memory data store (such as Redis or DragonflyDB) to rapidly retrieve features based on the same definition.
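The two stores answer different questions. The following is a simplified sketch, assuming feature values have already been computed into a hypothetical `OFFLINE` table. The offline path does a point-in-time lookup (the latest value at or before each training event, so no future data leaks into training), while materialization copies only the latest value per entity into a key-value store that the online path reads with a single lookup. The key format `txn_count_24h:<user_id>` is an assumption for illustration; Feast uses its own key encoding in Redis.

```python
from bisect import bisect_right
from datetime import datetime
from typing import Dict, List, Optional, Tuple

# Hypothetical offline table: user_id -> [(event_timestamp, txn_count_24h)], sorted by time
OFFLINE: Dict[str, List[Tuple[datetime, int]]] = {
    "u1": [(datetime(2024, 1, 1, 9), 1), (datetime(2024, 1, 1, 12), 2), (datetime(2024, 1, 2, 9), 2)],
    "u2": [(datetime(2024, 1, 1, 10), 1)],
}


def point_in_time_lookup(user_id: str, as_of: datetime) -> Optional[int]:
    """Offline path: latest value at or before `as_of`, never a future value."""
    rows = OFFLINE.get(user_id, [])
    idx = bisect_right([ts for ts, _ in rows], as_of)
    return rows[idx - 1][1] if idx else None


def materialize(end: datetime) -> Dict[str, int]:
    """Copy each entity's latest value up to `end` into a key-value 'online store'."""
    online: Dict[str, int] = {}
    for user_id in OFFLINE:
        value = point_in_time_lookup(user_id, end)
        if value is not None:
            online[f"txn_count_24h:{user_id}"] = value
    return online


online_store = materialize(datetime(2024, 1, 2, 12))


def online_lookup(user_id: str) -> Optional[int]:
    """Online path: one key lookup, the kind of request Redis or DragonflyDB serves."""
    return online_store.get(f"txn_count_24h:{user_id}")
```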

The Bottleneck: Redis at Scale

Initially, we used Redis as the online store, following common practice. While Redis performed very well with a limited volume of transactions (in the hundreds or thousands) and a limited number of customers, it quickly became a bottleneck as we scaled the platform.

To cope with the increased load, we had to move from a single Redis instance to a full Redis cluster. Even with clustering, Redis executes commands on a single main thread, which limited how many concurrent transactions each node could process and held back throughput. Sharding and horizontal scaling still failed to fully use the available resources, and the engineering team ended up spending a considerable amount of time simply maintaining the Redis cluster.
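Part of the cluster maintenance cost comes from how Redis Cluster distributes data. Every key is hashed with CRC16 to one of 16,384 hash slots, each slot is owned by one node, and multi-key operations only work when all their keys land in the same slot (which is what `{hash tags}` are for). The sketch below reproduces that slot calculation in Python; the three-node slot layout and the `user:1001` keys are hypothetical examples:

```python
def crc16_xmodem(data: bytes) -> int:
    """CRC16-CCITT (XMODEM variant), the checksum Redis Cluster uses for keys."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def hash_slot(key: str) -> int:
    """Map a key to one of 16384 slots; a non-empty {tag} is hashed instead of the key."""
    start = key.find("{")
    if start != -1:
        end = key.find("}", start + 1)
        if end > start + 1:  # only hash the text between the first { and the next }
            key = key[start + 1:end]
    return crc16_xmodem(key.encode("utf-8")) % 16384


def node_for(key: str, slot_ranges) -> str:
    """Return the node owning the key's slot, given (first_slot, last_slot, node) ranges."""
    slot = hash_slot(key)
    for first, last, node in slot_ranges:
        if first <= slot <= last:
            return node
    raise KeyError(f"slot {slot} is not assigned to any node")


# Hypothetical 3-node layout with evenly split slot ranges
SLOTS = [(0, 5460, "node-a"), (5461, 10922, "node-b"), (10923, 16383, "node-c")]

# Keys sharing the {user:1001} tag always land on the same slot and node
print(node_for("{user:1001}:txns", SLOTS) == node_for("{user:1001}:profile", SLOTS))  # True
```

Whenever nodes are added or removed, slots have to be migrated between them, and that resharding work is exactly the kind of operational overhead a single vertically scaled instance avoids.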

The Performance Breakthrough: DragonflyDB

Facing maintenance overhead and performance limitations, we decided to migrate the online store to DragonflyDB.

DragonflyDB is a drop-in replacement for Redis: it speaks the Redis protocol and supports the Redis API, so the migration required minimal changes. We did not have to change our code, our APIs, or the command-line interface (CLI) we used to connect to Redis.
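"Drop-in" works because DragonflyDB implements the Redis wire protocol (RESP). Any client that encodes commands the way the sketch below does can talk to either server unchanged; only the host and port differ. This is a minimal, stdlib-only illustration rather than how you would connect in production (you would normally use a Redis client library), and any host name you pass to `ping` is your own:

```python
import socket


def encode_command(*args: str) -> bytes:
    """Encode a command as a RESP array of bulk strings (the Redis wire format)."""
    out = [f"*{len(args)}\r\n".encode()]
    for arg in args:
        data = arg.encode("utf-8")
        out.append(f"${len(data)}\r\n".encode() + data + b"\r\n")
    return b"".join(out)


def ping(host: str, port: int = 6379, timeout: float = 1.0) -> bytes:
    """Send PING and return the raw reply; the same bytes work against Redis or DragonflyDB."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(encode_command("PING"))
        return sock.recv(64)  # a healthy server typically replies b"+PONG\r\n"
```

In a typical Feast setup the same principle applies: the `online_store` section of `feature_store.yaml` keeps `type: redis`, and only its `connection_string` changes to point at the DragonflyDB endpoint.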

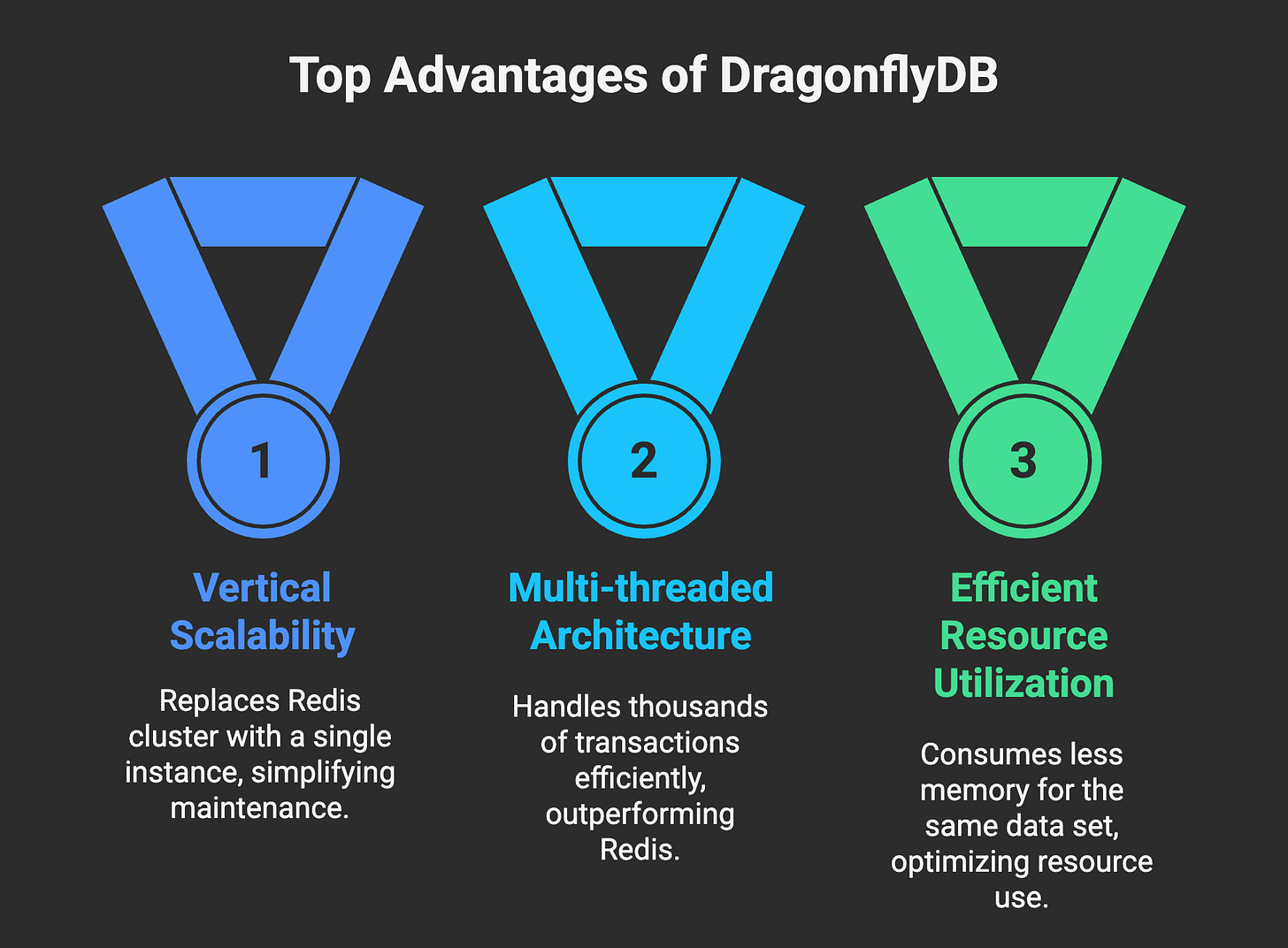

Key advantages of DragonflyDB

Vertical Scalability: DragonflyDB scales vertically, so a single instance replaced our entire Redis cluster. This drastically reduced maintenance complexity.

Multi-threaded Architecture: Unlike Redis, which executes commands on a single thread, DragonflyDB has a multi-threaded architecture that lets it use all available CPU cores to process many concurrent transactions. Internal benchmarks showed that a single DragonflyDB instance could handle up to 25 times more transactions than Redis.

Efficient Resource Utilization: DragonflyDB is built on a modern in-memory data store architecture and consumes significantly less memory for the same dataset.
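DragonflyDB describes its design as shared-nothing: the keyspace is split into shards, and each shard is owned by exactly one thread, so threads do not contend on locks for the data itself. The following is a toy Python model of that ownership pattern, not DragonflyDB's implementation. `ShardedStore` is a hypothetical class, and because of Python's GIL it only demonstrates the routing idea, not any real speedup:

```python
import queue
import threading
import zlib


class ShardedStore:
    """Toy shared-nothing store: each worker thread exclusively owns one shard.

    Requests are routed by key hash to the owning thread's queue, so a shard's
    dict is only ever touched by its own thread and needs no lock.
    """

    def __init__(self, num_shards: int = 4):
        self.queues = [queue.Queue() for _ in range(num_shards)]
        self.shards = [dict() for _ in range(num_shards)]
        self.workers = [
            threading.Thread(target=self._run, args=(i,), daemon=True)
            for i in range(num_shards)
        ]
        for worker in self.workers:
            worker.start()

    def _owner(self, key: str) -> int:
        # Deterministic key -> shard routing
        return zlib.crc32(key.encode("utf-8")) % len(self.queues)

    def _run(self, shard_id: int) -> None:
        data, inbox = self.shards[shard_id], self.queues[shard_id]
        while True:
            op, key, value, reply = inbox.get()
            if op == "stop":
                break
            if op == "set":
                data[key] = value
                reply.put("OK")
            elif op == "get":
                reply.put(data.get(key))

    def _call(self, op: str, key: str, value=None):
        reply = queue.Queue(maxsize=1)
        self.queues[self._owner(key)].put((op, key, value, reply))
        return reply.get()  # block until the owning thread answers

    def set(self, key: str, value) -> str:
        return self._call("set", key, value)

    def get(self, key: str):
        return self._call("get", key)

    def close(self) -> None:
        for inbox in self.queues:
            inbox.put(("stop", None, None, None))
        for worker in self.workers:
            worker.join()


store = ShardedStore(num_shards=4)
for i in range(100):
    store.set(f"user:{i}", i)
print(store.get("user:42"))  # 42
store.close()
```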

This migration ultimately allowed Feast to retrieve features much faster, drastically reducing prediction latency and improving overall performance.
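If you want to verify an improvement like this on your own workload, compare tail latency of online lookups before and after the switch, not just averages. Here is a minimal harness, assuming `lookup` is any function you supply (for example, a thin wrapper around your Feast client's `get_online_features` call, run once against each backend):

```python
import math
import time
from typing import Callable, Dict, Iterable, Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile for p in (0, 100]."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def measure_latency(lookup: Callable[[str], object], keys: Iterable[str]) -> Dict[str, float]:
    """Call lookup(key) for each key and report p50/p99 latency in milliseconds."""
    timings_ms = []
    for key in keys:
        start = time.perf_counter()
        lookup(key)
        timings_ms.append((time.perf_counter() - start) * 1000)
    return {"p50": percentile(timings_ms, 50), "p99": percentile(timings_ms, 99)}
```

Running the same key set through both backends gives two comparable summaries; p99 is usually the number that decides whether a fraud check finishes in time.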

Seamless Migration Demonstration

A video that will help you migrate your Feast feature store from Redis to DragonflyDB.

If you want a dedicated video on this migration, already applied to 100+ MLOps models, just like this post ❤️ and comment “Link” below. I will make sure it gets delivered straight to your inbox.

A Thought to Leave You With

When scaling your MLOps platform, the architectural foundation of your online feature store, specifically its ability to scale vertically and use multi-threaded processing, is paramount for maintaining both peak performance and operational simplicity. That is why I strongly recommend DragonflyDB as a Redis alternative.

Until next time, keep building, keep experimenting, and keep exploring your AKVAverse. 💙

Abhishek Veeramalla, aka the AKVAman
